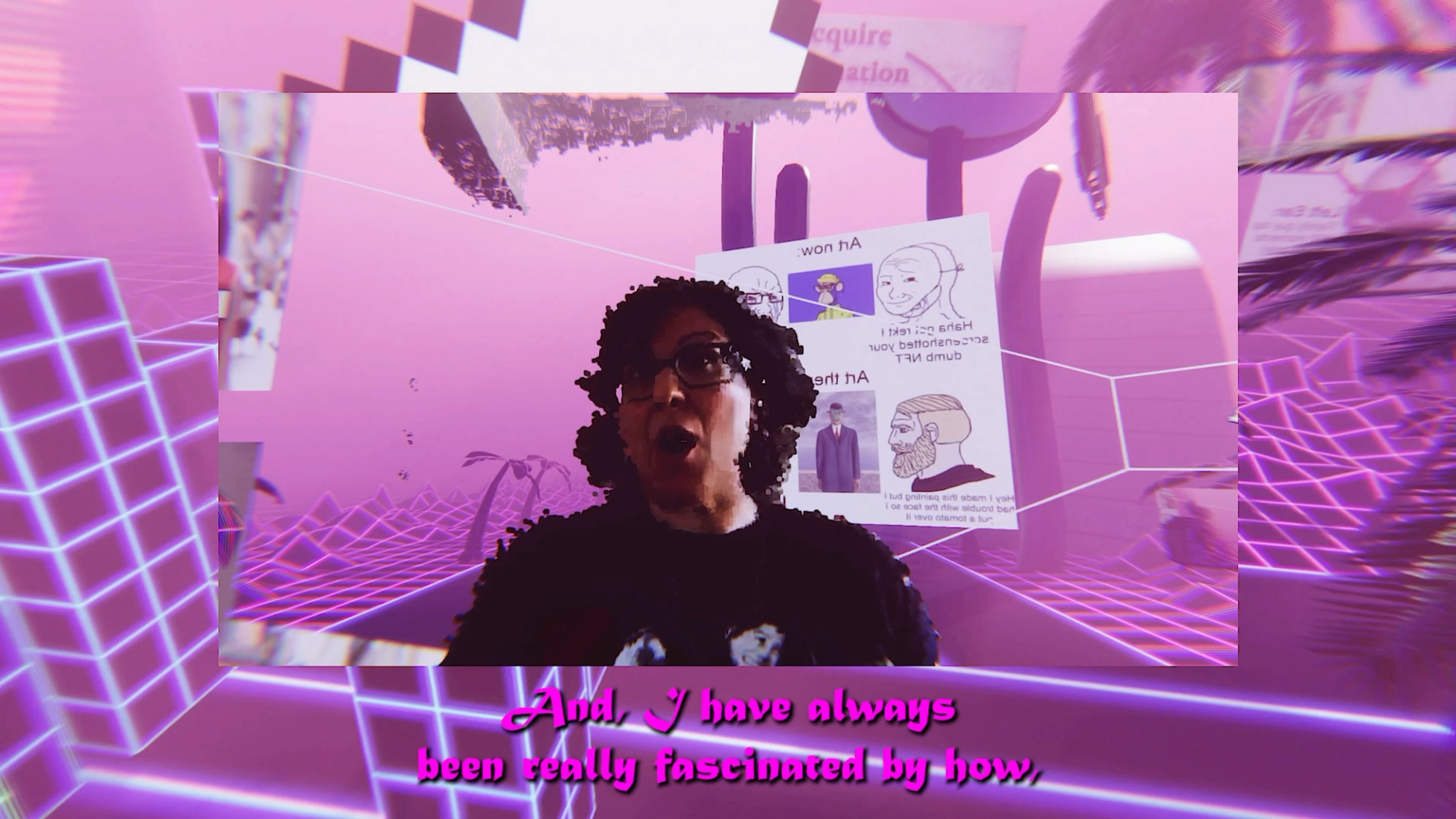

This conversation is the first of a series produced by Me AndOther Me as part of their ongoing WebRTC BackRooms project, a podcast deep-scrolling into XR, AI, and the future of hanging out in the spatial internet, hosted by Koozarch. You can listen to the full episode via our podcast channels or watch the video version on the Me AndOther Me Substack page.

Me AndOther Me Welcome to the Backroom of im/possible image resolutions w/ Rosa Menkman. This is an excerpt from our conversation in the WebRTC BackRooms, a podcast series deep-scrolling into XR, AI, and the future of hanging out in the spatial internet. In this first episode, together with Rosa, we interrogate resolutions, image standards, and politics of seeing. We ask what defines an image, what defines seeing, and what gets erased when technology resolves the world into sight. The discussion follows Rosa Menkman’s long-term research into compression errors, resolution limits, and the technical choices that shape visibility. Resolution is approached as a compromise rather than a neutral condition, shaped by efficiency, standards, and exclusions.

M&OM Let’s start our conversation by talking about the format of this podcast itself. Instead of a flat video call, we’re meeting in a shared point cloud space: multilayered informational images updating in real time from different locations. That means we’re surrounded by streams of depth data, layered visual perspectives that sometimes compress or even collapse. For this format, we chose to create a new space based on Web Real-Time Communication (WebRTC), a set of open-source APIs that allow peer-to-peer networking through our own signaling server. It is a space that favors opacity in opposition to the logics of exposure. The volumetric data doesn’t get stored; it simply passes through our own private server, avoiding the extractive gaze. From your perspective, what kind of “image” is this?

RM That’s a complex question, but I’ll try. When I look at the image right now, I see my own face, partly broken up by you, by your studio... So in a way, what I’m looking at feels almost architectural. At the same time, it’s clearly not just one image. It’s not a single, stable frame. It’s more like a composite stream, a collage made of different kinds of images layered together. The whole thing is fragmented. It isn’t tied to one optical reality — like my point of view — or to one specific place. There’s a forest. There’s my living room. There’s your studio space. They all coexist in this shifting field. So if we try to define it, the definition keeps sliding. It’s elusive. It depends on where you point your lens, and its politics shift with that position too.

From my perspective, it might be best described as an im/possible image. Not something you could just capture with a phone. It needs the custom pipeline you described — the special app that deliberately uses WebRTC protocols on your own terms rather than through their default settings. What I see is a composite infrastructural image. It’s technically possible, but it exists outside standard builds. It can’t really happen anywhere else right now except here, between us, in this live transmission that bends the usual streaming pipeline. And that bending matters. Normally, images are resolved through compression, storage, and exposure. You’ve built a way around that. You’re refusing that extractive infrastructure. So the image functions less as a representation and more as a demonstration. It shows what an image could be if it were constructed differently. In that sense, it’s a proof of concept. A kind of best practice for thinking about the image as infrastructure.

M&OM I think there’s an urge to really ask what kind of image we’re inhabiting here, especially in this oversaturated image culture we’re living in. That question feels pressing. And it makes me think about how, throughout the history of media, the idea of what an image actually is has kept expanding. We began with representational images, then technical or operational images produced by machines, as Farocki famously told us; later came simulated and computer-generated images. In the internet era, images became networked and often circulate as low-quality copies: what Hito Steyerl calls “poor images,” degraded files that travel quickly. Now we have algorithmically generated pictures and even “invisible” images made by machines for other machines, as Trevor Paglen describes. And here we are with volumetric, spatial, real-time images like this point cloud stream. How do you see the current state of the image? Do we need new conceptual tools to understand what an image is today – especially considering the politics of visibility at play, where resolution can dictate how and what we see and what remains unseen?

RM Yeah, when you were asking me, “What is the current state of the image?”, I think the image is no longer just a picture: It is a pipeline. So this renders the image into a non-static or procedural construct, which is sometimes compiled on demand from data or sometimes even printed. We can think about it as something that actually involves different layers and procedures. So it’s very important — and I think this is the main point — that the image is never static anymore, even if it appears that way to our eyes. The image can be many things, depending on the lens you choose. As you’ve already shown, the image is infrastructural, shaped by codecs, standards, and platform governance.

But we can also think about it as limited, built from thresholds that decide what appears and what is discarded. As you say, it’s manifold. It’s plural. It can be optical, synthetic, operational, and invisible all at once. We can also consider it as something economic, optimised for speed or for engagement. Besides that, it’s unstable. It can be mutable across contexts, devices, and training sets. And I think most importantly — and this sometimes requires a bit of training to realise — it’s always political. Defaults decide what or whose images are legible or visible, and whose drop out of sight, whether through censorship or shadow banning, for instance. So the current state of the image is something that is negotiated. It’s a negotiated form of visibility, rendered under specific infrastructural constraints.

In order to approach the image today, we do need new conceptual tools. Not just new typologies of images, like the poor image or the invisible image. I think we need a more holistic approach. Maybe not a typology, but a topology of image paradigms. Because while a typology puts these images into separate boxes with their own definitions — operational, poor, synthetic, metabolic — a topology changes the question. It shifts it from “What kind of image is this?” to “What is the architecture of the resolution? How is the image resolved?”

Resolution studies, I think, can give us that framework. It helps us point to the rules that govern the images that appear, circulate, and disappear — the ones we keep naming — and it offers a more useful tool to consider them in relation to each other.

M&OM In Beyond Resolution you argue that a resolution is never neutral – it’s a choice that involves trade-offs and even power dynamics. For example, you write that standardising resolutions brings efficiency and order, but also involves compromises and the obfuscation of alternative possibilities. Could you explain what you mean by the “politics of resolution”? How do things as mundane as image formats or screen standards carry biases or hidden assumptions about what should be visible?

RM To me, every resolution is a decision about what is shown, what is allowed to be shown, and how it is seen or received. But these forms always include hidden settings that are treated as neutral. Take something simple like a drop-down menu. It looks like a set of standard options, but every standard already carries a bias. Every format encodes a trade-off. It decides what you keep and what you throw away, where you spend bits and where you save them. In our case, for example, we’re looking at point clouds. The bit depth, the number of points we show, is a trade-off between speed and cost. Another example is JPEG compression, which is one of my favorite subjects. JPEG is still the most widely used compression today. But it was originally tested on a single image — a 1973 Playboy centerfold of a naked Caucasian woman. JPEG relies on a luma model, where light is considered the dominant factor. It assumes the human eye is best at detecting edges of light contrast, so light becomes more important than colour. But the human eye isn’t a one-setting-fits-all system. At low luminance, in darker conditions, the eye’s sensitivity to contrast drops sharply. In bright light, I can see a lot of contrast. In the dark, much less. That means darker images actually require more data for the eye to perceive detail. If a compression algorithm is tested only on a white body under certain lighting conditions, it hasn’t been tested on darker skin tones or shadow-heavy environments. If compression treats light and dark the same way, problems follow. Data gets removed exactly where the eye already struggles. Shadow textures turn into smears. Micro-contrasts disappear. This is something we see in many universal defaults. They are created for one environment and assumed to work everywhere.

In the case of JPEG, I would argue that this allowed racism to enter the image processing pipeline. Standards and settings promise interoperability. But they also fix hierarchies of visibility. Image resolution is never just a number. It’s a site of power. It naturalises political and economic decisions as technical settings. And the more complex our image stacks become, the more invisible these compromises are. They get buried under layers — sensors, codecs, platforms — each adding its own economic and technical biases. This is where resolution studies become a kind of forensic practice. It means uncovering the choices disguised as defaults. It means recognising that standards are black-boxed decisions about what is possible and what is not. And it also means asking where unimplemented or unrealised resolutions might still exist — what other ways of seeing could be imagined.

M&OM It sounds like a form of resistance.

RM I think what we’re doing in this interview is also very much a form of resistance. The way you’ve created this software, this image pipeline, shows that — maybe not anyone — but anyone who really wants to can create their own protocols. And by building adaptive protocols, you can demonstrate that things can be done differently. That act alone makes the pipeline, the protocols inside it, and the black-boxed standards more open to dialogue. It opens space for alternatives. It makes them available for scrutiny and for discussion. And I think that’s where resistance actually begins.

M&OM You’ve coined the term im/possible images in your recent research. What are im/possible images, and why are they important to you? We know this thread grew out of your 2019 residency at CERN, exploring “what makes things im/possible”. Can you talk about what an im/possible image might be?

RM Thank you for pronouncing it correctly: im/possible, with the slash. There’s a split between im- and -possible. That references Roland Barthes’ book S/Z from the 1970s. In that book, Barthes describes the difference between readerly and writerly texts. A readerly text is closed. It carries a single dominant meaning, like a realist novel. A writerly text, on the other hand, is open. It has plural meanings. The reader actively co-produces meaning by navigating different codes and choosing between them. In S/Z, Barthes argues that a text isn’t simply a linear carrier of meaning. It can be more like a networked cloud or object, where the reader’s path determines what gets actualised, what is read, what becomes meaning. Since we’ve established that images are no longer static, I started to wonder whether a similar concept could apply to images and to the image processing pipeline. Every step in that pipeline introduces compromise. And maybe every compromise also involves an excess of what is possible and what is im/possible. Every choice in a readerly text selects one meaning over another. In the same way, a readerly image makes a decision about what is rendered and what is not rendered.

During my residency at CERN, I formulated a research question I asked every scientist I met. The question was written in scientific terms, but it was speculative:

Imagine you could obtain an im/possible image of any object or phenomenon you consider important, with no limits on spatial, temporal, energy, cost, or signal-to-noise resolution. You could even imagine an image produced beyond resolution — one that defies the usual settings involved in image processing. What image would you create?

From that question, I collected more than eighty different imagined images. I then organised them into a kind of topology of impossibility in relation to images. I found categories where images were im/possible due to technological or low-resolution constraints. Others were im/possible because of belief systems, imagination, or temporal conditions. Some images were once im/possible but have since become possible through technological development. Others were once possible but have become im/possible. And then there were newer paradigms of im/possibility. I called them new complexities of the image, where im/possibility slips in differently. This is where questions around AI begin to emerge.

M&OM Building on this, do you feel many images are “im/possible” less because physics won’t allow them and more because platform policies shut them down: moderation playbooks, codec whitelists, DRM, fixed aspect-ratios/bitrates, proprietary APIs, Content ID takedowns, monetisation filters, auto-flagging, shadowbans? If that’s the case, how do we push back? What would a counter-stack look like in practice: open codecs, user-run servers, alternative resolution standards, P2P pipes, and tools that let artists publish and talk outside the capitalist platform's extractive gaze?

RM Yes, I think that’s such an important question. Over the last years, I’ve really felt — and I think many of us have — that sense of powerlessness growing. As creators, as artists, but also just as people. Whatever we say online feels washed out. It dissolves into platforms. And instead of meaningful exchange, we get waves of violence, outrage, things that are disheartening. It becomes hard to believe in alternative futures or even in small shifts. I’m not saying the future looks bright. But I do think we can’t give in to that feeling of powerlessness. Because that feeling isn’t neutral. It’s produced. It’s shaped by political and economic forces, and by platforms that benefit from keeping us passive. So we have to keep searching for alternatives. When we talk about im/possibility, then, it’s not just physical. It’s infrastructural. I was really struck by Nora O' Murchú’s text The Image as a System, where she writes that the image no longer just represents — it manages us. It determines what kinds of speech appear plausible and what futures seem actionable. We’re no longer outside the image as observers. We are part of it, both audience and actor.

So instead of asking what the image says, we should ask what it does. What actions does it permit? What worlds does it make? What does it make feel inevitable?

She also writes that with governing images, meaning isn’t contested — it’s overwritten. And democracy doesn’t collapse through violence; it dissolves in perfect resolution.

If we take that seriously, then alternative resolution becomes a place where power can still be negotiated. That’s where activism can exist. Where a different articulation is still possible. Many images are technically possible, but operationally impossible. They get washed out of our feeds. And the images that remain possible — the ones that give us the feeling we have a voice — are often the ones that are still allowed. Activism becomes standardised. It becomes blunt. It loses its edge. So we need to renegotiate where action in the image can exist. Where it lies. How we can reclaim it. If we stay inside platforms — and realistically, most of our images live there — then maybe we need to reformat our images. We need to teach infrastructural literacy. We need to make defaults negotiable. Disposable. At the same time, I do believe in building our own tools, our own platforms, our own software. But even from inside dominant systems, I think the key lies in literacy and disposability — in refusing to treat defaults as fixed, and in learning how to shift them.

M&OM Speaking about the eye of the platform, or the identity of the platform, makes me think about your other projects — about ways of seeing, mythical forms of perception, and modes of sensing that go beyond the human gaze. Several of your art projects use narrative or myth to explore perception – I’m thinking of works like Refractions and The Shredded Hologram Rose. In Refractions, you constructed a mixed-media installation around the Cyclops myth in a cave in Cyprus, using a fictional one-eyed perspective to inform human perception. And in The Shredded Hologram Rose, you reference the idea of a “Hologram Penrose-Stairs to Nowhere” – an impossible endless staircase – which you frame as what engineers simply call “Progress”. These pieces are evocative and metaphorical. How do mythic narratives help you investigate the limits of vision and resolution? For instance, what does the Cyclops’ single gaze let you reveal about how we construct images? And how does the Penrose stairs – a looping illusion of progress – relate to our pursuit of ever-higher resolutions or new image technologies?

RM I like that you said “a fictional one-eyed perspective,” because I’m not sure it’s purely fictional. I’m thinking more about alternative eyes. We’re surrounded by them — antennas, sensors, radar systems. They’re very singular in how they see. So it really depends on how we define myth, and how we define fiction. For me, myth is a tool for creating lore. And in that sense, an algorithm is always tied to a specific time and place. Algorithms can also become myths. They’re repeated and iterated until they feel normal, standard, indisputable. They turn into their own kind of lore. Through mythology, we write lores. It’s basically code repeated until it feels natural, like a given.

When I began working on Refractions during my EMAP residency with NeMe in Cyprus, I proposed to study contemporary Cyclopes as alternative ways of seeing. And it’s important to note that the Cyclops isn’t singular. The figure mutates across history. The earliest Cyclopes — Brontes, Steropes, and Arges — were the makers of thunder. Later, there are other variations. Odin, for instance, becomes a kind of Cyclops by choice when he trades one eye for knowledge of his own fate. When I went to Cyprus with this idea of contemporary Cyclopes, I found something unexpected. There’s a system called Cyclops — cyclops.cy — a geo-hazard monitoring network that uses corner reflectors and radar passes to detect shifts in the earth over time. It describes itself as monitoring the Earth to protect it for tomorrow. But I also found another Cyclops in Larnaca: a maritime and port surveillance system. It’s currently used in support of Israel’s military operations in Palestine. That made something very clear to me — not all vision is care. Some vision is about control and violence.

Thinking through myth helped me articulate that technology is never neutral. A Cyclopean vision can protect, or it can harm. The Penrose Stairs connects to that idea in another way. For me, it’s a metaphor for progress. Like an Ouroboros, endlessly looping. I first used the Penrose Stairs in a project called Xilitla in 2013. It was a kind of video performance tool — a 3D environment where I mapped video textures onto objects so that videos weren’t confined to flat frames. In that world, the protagonist — the Angel of History, already a Janus figure looking both backward and forward — could climb these stairs. But if she fell off the world, she would respawn at the top of the staircase. So falling down became another way of ascending. It was inverse progress. A loop that keeps us busy, always moving, but never really arriving.

The Shredded Hologram Rose sits inside that same loop. It deals with the layered complexity of the holographic or 3D image. There’s this constant drive to improve, to refine, to achieve the highest resolution. But every new layer of development also breaks something that came before. Progress is always destructive at the same time. So both the Cyclops and the Penrose Stairs help me think about vision and resolution not as neutral improvements, but as structures — mythical, technical, political — that shape how and why we see.

M&OM So my next question stays within that same line of thought, but now in the AI landscape. Social media is already extremely flooded with AI-generated images. A lot of them feel sloppy, repetitive, disposable. And we’re already struggling to make meaningful content visible on these platforms. So what does it mean to flood these spaces even more with generated imagery — especially when we know how easily it can be weaponised by political forces?

Half of the internet is already shaped by bots. Bots simulate trends. They simulate influence. They amplify certain narratives. If we now pair that with image and video generation models that produce extremely realistic content, we move toward an internet that is fully generative — content produced by systems talking to systems. If that’s the case, where do we locate trust? Where do we locate shared knowledge, if images can no longer function as carriers of evidence in the way we once assumed?

Many of us already feel how real events — events that hurt, that matter — get buried under the noise of the clickbait economy. They disappear under waves of distraction and synthetic content. So how do we remain sensible, or even collective, inside a media environment saturated with AI imagery? If we can no longer rely on the image as proof, have we lost trust in images altogether? Or does trust have to be relocated somewhere else?

RM Well, what you see with AI is that we’ve lost the link to an optical referent entirely, right? That connection, which used to tie truth to a form of indexicality, has been severed. And that means that the rules of photographic evidence, of forensic evidence-making, have been severely undercut and changed. We see that the internet has become kind of a graveyard of the systems that we worked to get into place, of what forensics with new technologies could be. It’s not just the internet; all our technologies together are now putting that knowledge-building capacity to rest. And that is detrimental to our society, to our culture, to our ways of knowledge-making. What comes into place is illegibility and not knowing. So we have to put new systems in place. Systems that fight against the system that is now unknowable in terms of scale and speed. What that means is that I think we need to somehow decelerate. The digital was put into place to speed things up. So we have to find practices that impose slow use, practices that can live and coexist, or even take over. That implies a type of care. Because care can bring back trust. Care is not fun. It takes time. It requires energy. The amount of work is not necessarily represented in the output that gives pleasure. What could that mean in practice?

As much as it hurts me to say it, I don’t think we can step away from technology, because it’s in our lives. But it means we have to reclaim and remake those technologies. We have to locally train and privately own platforms, as well as AIs where necessary. We have to take control of our own databases. We have to learn how to create our own algorithms. And we have to do that in groups and communities we trust. It’s actually the same answer to a different paradigm shift. It just becomes more dire and more heavy. We also need to keep making space and create spaces, platforms like you do, that make room for what doesn’t fit into the mainstream. In order to resist the normal, the standard forms of rendering, and to step outside of the trap of conformity — which means becoming part of the slop machine.

It doesn’t even matter if we think we’re saying something critical. If our output fits into the slop machine, it becomes slop. And finally, I think it’s important to disconnect sometimes. That’s really hard. It’s also a privilege. But disconnection means taking care of yourself and feeling some type of power that is easily lost inside the AI stream, which moves faster than humans can make sense of. To feel your power, you need to step outside of it. Look at it from a distance. Or step away entirely, just to remain and take care of your own power in that sense.

—

This conversation is an excerpt from the episode The Backroom of im/possible image resolutions w/ Rosa Menkman from the WebRTC BackRooms Podcast by Me AndOther Me. You can listen to the full episode below or through our podcast channels, or watch the video version on the Me AndOther Me Substack page.

Bios

Me AndOther Me is a new media-driven artistic and architectural research studio exploring the future of our spatial experiences and communication through practical applications of social mixed reality experiences focused on online culture, counter-platforms, and the spatial web. The studio is directed by Innsbruck-based architects, educators and researchers Cenk Güzelis and Anna Pompermaier. They are interested in how social media and the internet have evolved to accommodate online communities in networked virtual spaces that have become alternative places to practice social and cultural activities, and how these virtual spaces affect the architecture of our social lives and social selves.

Rosa Menkman is a Dutch artist and researcher of resolutions. Her recurring artistic protagonist, the Angel of History (inspired by Paul Klee’s 1920 monoprint Angelus Novus and conceptualised by Walter Benjamin in 1940), functions as a foundational framework for her explorations of image technology. In her written research, Rosa focuses on noise artifacts that result from accidents in both analog and digital media. As a compendium to this research, she published Glitch Moment/um (INC, 2011), and further explored the politics of image processing in Beyond Resolution (i.R.D., 2020). From 2018 to 2020, Rosa worked as Substitute Professor of Neue Medien & Visuelle Kommunikation at the Kunsthochschule Kassel. Since 2023, she has been running the Im/Possible Lab at HEAD Genève.

Podcast Credits

Direction & Production: Me AndOther Me

Virtual Camera: Cenk Güzeliş, Luca Lazzari, Viktoria Märkl, Lilly Krüger, Ruben Ungerathen, Adrian Weiss, Linus Memmel

Technical Setup: Me AndOther Me, Luca Lazzari

Sound Design: Paul Böhm aka Brootworth

Audio Mix: Kristaps Andris Austers

Volumetric Streaming: Me AndOther Me, Marek Simonik (Record3D)

Text: Me AndOther Me

Thanks to the ORF III Cultural Advisory Board. Produced with the support of the Federal Ministry for Housing, Arts, Culture, Media and Sport as part of the funding program Pixel, Bytes + Film.